Most IT professionals rate the pain of managing encryption keys as severe, according to a new global survey by the Ponemon Institute.

On a scale of 1 to 10, respondents rated the risk and cost associated with managing keys or certificates at 7 or above, and cited unclear ownership of keys as the main reason. “There’s a growing awareness that the security benefits of encryption really accrue from the keys,” said Richard Moulds, vice president of product strategy at Thales e-Security, the sponsor of this report. “The algorithms that encrypt the data are all the same; what makes it secure is the keys.”

But as organizations use more encryption, they also end up with more keys, and more varieties of keys.

“In some companies, you might have millions of keys,” he said. “And every day, you generate more keys and they have to be managed and controlled. If the bad guy gets access to the keys, he gets access to the data. And if the keys get lost, you can’t access the data.”

Other factors that contributed to the pain were fragmented and isolated systems, lack of skilled staff, and inadequate management tools. And it’s hurting worse than before. “The proportion of people that rate it as higher levels of perceived pain is higher than last year,” said Moulds.

One reason that pain is increasing could be that encryption is becoming more ubiquitous, he said, embraced by industries and companies new to the challenges of managing keys and certificates.

According to the survey, which is now in its 10th year, the proportion of companies with no encryption strategy has declined from 38 percent in 2005 to 15 percent today. Meanwhile, the share of companies with an encryption strategy applied consistently across the entire enterprise has grown from 15 percent to 36 percent. The biggest growth last year was in healthcare and retail, two sectors hit by major public security breaches.

In the health and pharmaceutical industry, the share of companies with extensive use of encryption jumped from 31 to 40 percent. In retail, it rose from 21 to 26 percent. However, for the first time in the history of the survey, the proportion of the IT budget going to encryption has dropped. Between 2005 and 2013, it climbed steadily from 9.7 percent to 18.2 percent, but dropped to 15.7 percent in this year’s report.

The biggest driver for encryption was compliance, with 64 percent of respondents saying that they used encryption because of privacy or data security regulations or requirements.

Avoiding public disclosure after a data breach was cited as a driving factor by only 9 percent of the respondents. Data residency, in which some countries allow protected data to leave national borders only if it’s encrypted, didn’t even make the list.

“It didn’t rank as high on the list of motivators as you would have thought,” said Moulds. “But data residency is an increasing driver, and I think it’s going to be a big driver in the future.”

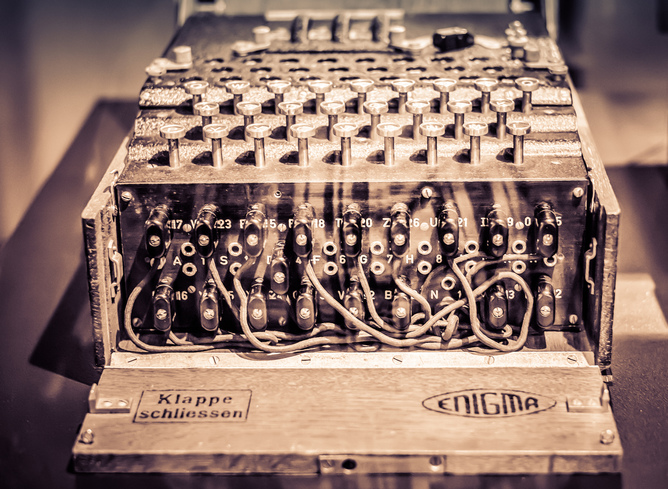

Figure A: Tel Aviv University researchers built this self-contained PITA receiver.

Figure B: A spectrogram